By Nils Lenke, VP & GM, Apps

“Are we there yet?” It’s a phrase most parents have heard during road trips with their kids because, let’s face it, car rides can be boring. With autonomous driving on the horizon, this feeling may eventually extend to the “driver” as well, as their once-active role in the journey transforms.

But you rarely hear a child ask, “Is it over yet?” while enjoying something exciting like a roller coaster or theme park ride. That got us thinking: what if we could turn a car ride into an experience like that? In this blog, we will explore a few steps on the road towards that goal, some of which are already happening today, and some of which may need a couple of years to come to fruition.

Step 1: Make the environment around the car accessible to the people in the car.

There is a lot going on around the car, and the scene is ever-changing. If only you knew more about all there is to see! Well, what if your car could tell you? Cerence Tour Guide makes that happen by bringing professional, fun information about landmarks and sites directly into the car. We can even make the experience interactive with Cerence Look, which Daimler recently rolled out in their new S-Class as Mercedes-Benz Travel Knowledge. The trick is to have a “digital twin” of the outside environment in the cloud and use it to make all that information accessible via the connected car.

Step 2: Make more use of the windows (and screens).

Today, the windows of a car serve a fairly singular purpose: enabling us to see what is around us. But what if we could give them a bigger role in the driving experience by, for example, overlaying them with additional information and content? Imagine if we were to do this as an augmented reality (AR) overlay, showing information about what you see, or showing how the same scene looked 100 or 200 years ago, maybe during a famous civil war battle or the like. Of course, you can also use the ever-bigger in-car touchscreen for this, combining the feed of a front-facing camera with AR, as we demonstrated in Berlin back in 2019. Adding fitting background noises – horses, battle cries, and so on, to stay with that civil war example – via the impressive audio systems many cars have these days further extends the immersive experience.

Step 3: Make the car interior and the seat more active.

Car seats are more than just things to sit in, even today: with embedded heating, ventilation, and massage functions, and together with other interior features like ambient lighting, they help drivers relax or be refreshed, and can often be triggered by voice commands like “I’m tired” or “I’m stressed.”

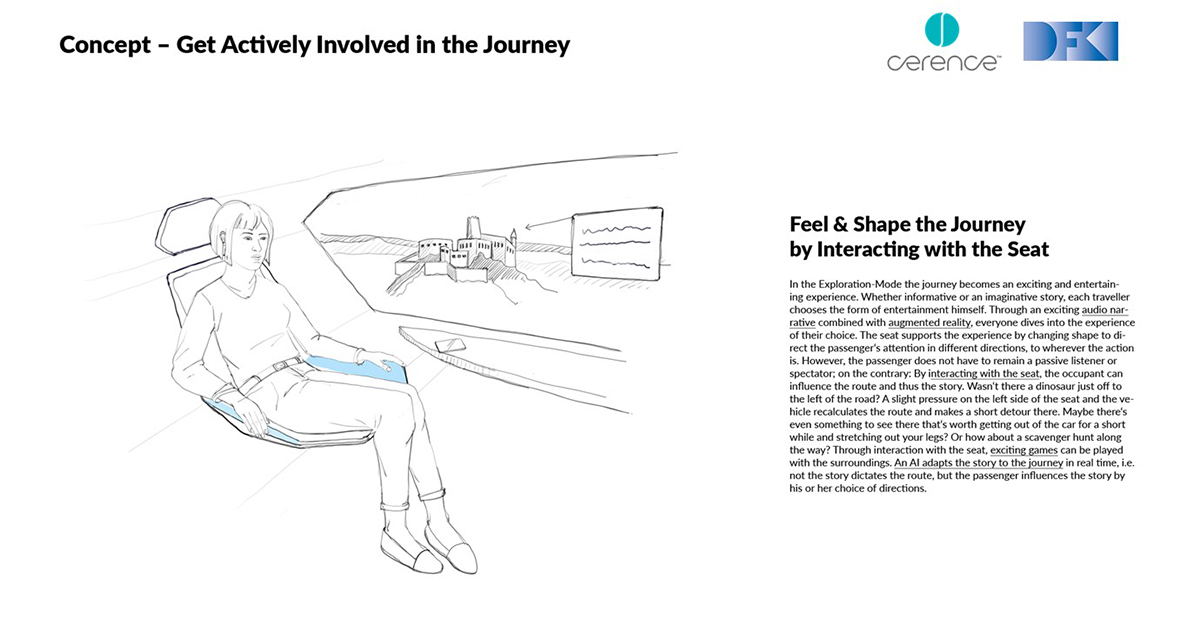

Now imagine if these same features – ambient lighting, AC, seat ventilation, etc. – could be integrated with a multimedia tour. Say our (fictitious) civil war battle happened in winter: brace yourself, the AC and the ventilation will make us suffer the same pains! This brings a whole new level of multi-sensory experience.

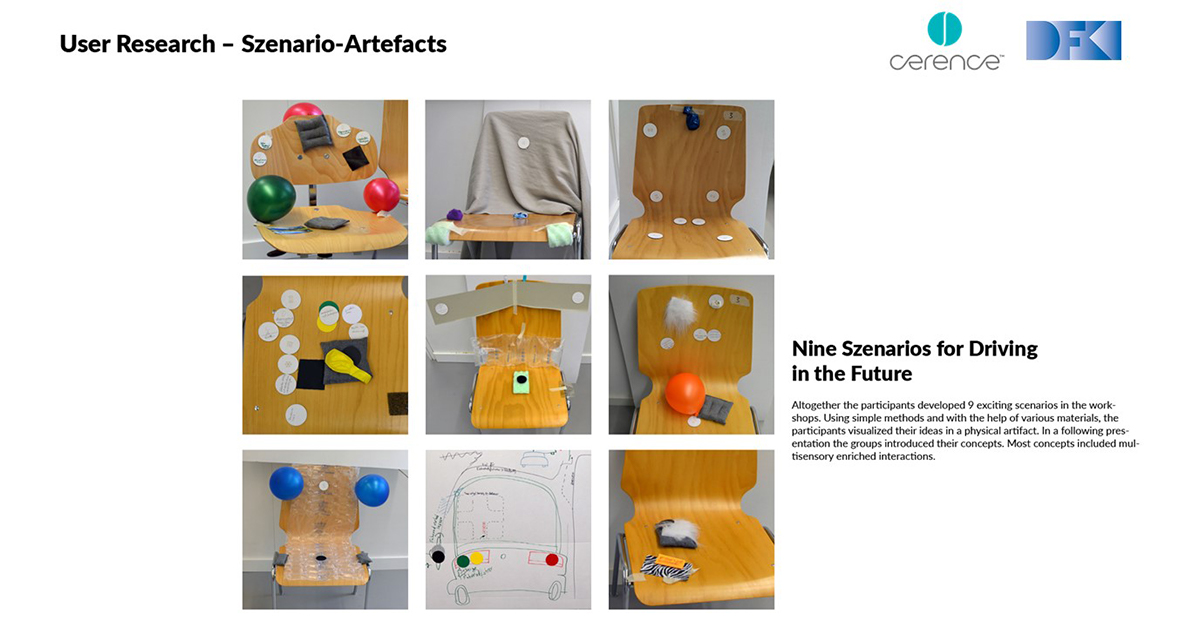

There are many ways the car’s seats can play a bigger role. We recently did a project with the Smart Textile lab of DFKI (Germany’s leading AI research institute) to explore some more concepts. Participants in a user focus group were really creative and came up with lots of ideas:

One of the most interesting was to embed actuators into the seat that allow it to change its form and “nudge” the user to pay attention to a certain direction, for example:

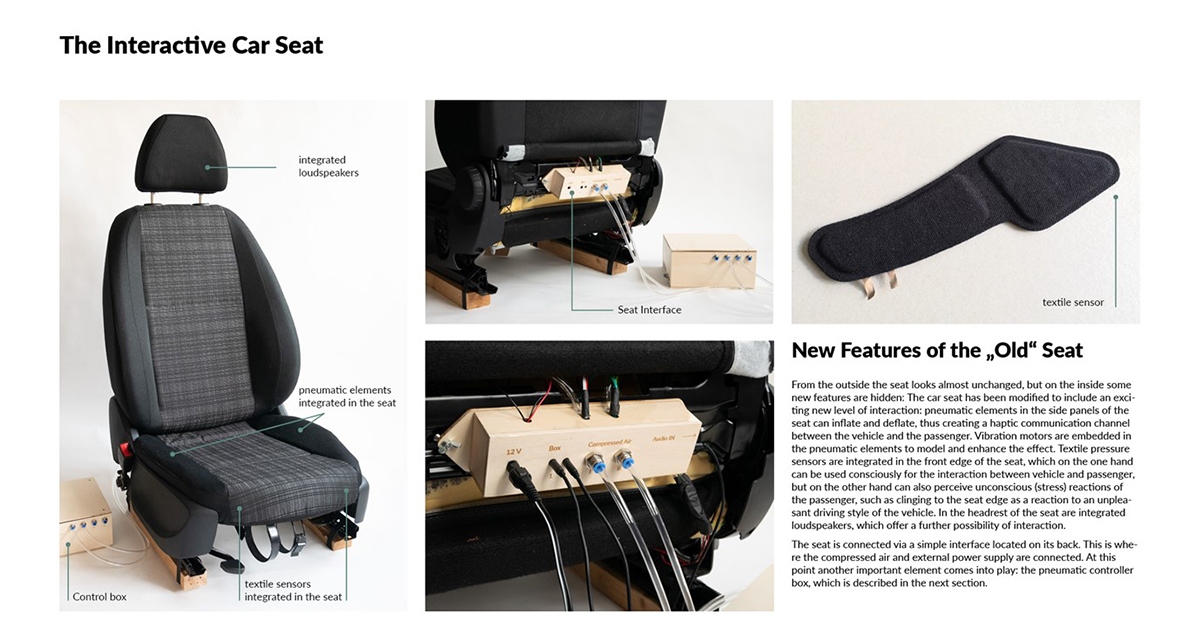

The DFKI team built a working prototype of this feature, combining it with another one: embedding sensors that detect when somebody grips the seat, perhaps because they feel uncomfortable.

These types of innovations can help make sure we don’t miss anything – and will also help us understand if the experience is at times a little too immersive!

Step 4: Supercharge the environment.

Interacting with the environment, as we saw in step one, is great, but what if we could enhance that experience further? Technologies like 5G will improve localization of cars (beyond what GPS can do) and enable a lot more V2X (Vehicle-to-X) functions. So, what if there were things waiting outside that “fire off” at the very moment the car drives by? Like animated scenes, special billboards, lighting of buildings, sound effects, and lots more. Certain areas could essentially be set up to be the “theatre” for our multimedia tour. Or the whole city could be such an area – and driving will never be boring again!

It’s hard to say when this whole vision will become a reality, but I am sure driving a car – or, as we see increasing levels of autonomy, being driven by a car – in 10 years will be an entirely different experience from what it is today. Some of the technologies mentioned here will certainly play a role in that, and I couldn’t be more excited to see them come to life. To learn more, visit www.cerence.com/cerence-products/cloud-services, and keep up with us on LinkedIn.